Imagine having a manager for your website who helps you track whether you are heading in the right direction, shows you how many potential prospects visited your website and tells you whether your ad campaigns are worth the investment.

That’s Google Analytics for you, and the best part? He works for free if you are just starting out!

But not every visitor your manager counts is a human. Many of them are bots: software programs that visit websites automatically to carry out tasks such as indexing pages for search engines.

These bots can be categorized into two types:

- Good Bots – These bots are essential for analysis, diagnosis and even for optimizing our website’s SEO.

- Bad Bots – These bots get their name because they hamper our traffic analysis. They pretend to be human users, skew our metrics and spam our user data.

Picture the following situation: your website gets 100 clicks and only 10 of them lead to a purchase. According to your analytics, the conversion rate of your sales funnel is 10 per cent. But what if 50 of those clicks came from bots? Then only 50 clicks were human, and the actual conversion rate is 20 per cent (10 purchases out of 50 human clicks). Because of bots, the reported result is off by 10 percentage points, which is half of your true conversion rate!
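
The arithmetic above can be checked with a few lines of Python. This is a minimal sketch: `conversion_rate` is a hypothetical helper written only for this example, and the figures are the made-up ones from the scenario.

```python
def conversion_rate(clicks: int, purchases: int, bot_clicks: int = 0) -> float:
    """Return the conversion rate in per cent, counting only human clicks (hypothetical helper)."""
    human_clicks = clicks - bot_clicks
    if human_clicks <= 0:
        raise ValueError("need at least one human click")
    return 100 * purchases / human_clicks


reported = conversion_rate(clicks=100, purchases=10)               # bots counted as humans
actual = conversion_rate(clicks=100, purchases=10, bot_clicks=50)  # bots removed
print(f"reported: {reported:.1f}%")                                # reported: 10.0%
print(f"actual: {actual:.1f}%")                                    # actual: 20.0%
print(f"gap: {actual - reported:.1f} percentage points")           # gap: 10.0 percentage points
```

The only change between the two calls is the denominator: removing bot clicks shrinks it, so the same 10 purchases represent a larger share of real visitors.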

Quick Fact: About 38.2 per cent of all internet traffic comes from bots.

From a business perspective, we want our reports to reflect human traffic only, not bot traffic. That makes it essential to identify bots and reduce their share of our traffic reports.

How Can We Identify These Bots?

If anything in your ecommerce analytics report looks suspicious, bots might be behind it.

To identify these bots, follow these steps in Google Analytics:

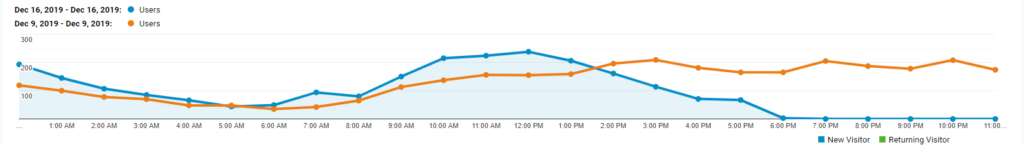

- Check for spikes in the number of users visiting your site. If a spike looks unusual, there is a high probability that it’s bot traffic.

- Go to Google Analytics, click Acquisition in the left-hand column, then click All Traffic and then Channels.

- Scroll down to the Default Channel Grouping table and click Referral. If the referral sources don’t look relevant to you, there is a high chance that it’s bot traffic. Make sure to check all the referrals carefully.

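- Now for the interesting part: check for sources with a 100 per cent bounce rate and an average visit duration of 00:00:00. Google Analytics usually records a visit that views only one page as lasting 00:00:00, so a single such visit proves nothing, but a legitimate source will almost always send some visitors who view more pages and stay longer. When every visit from a source bounces with zero duration, it is very likely bot traffic.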

- To check for ghost referrer spam, click Secondary dimension and type Hostname. Any hostname listed that is not your website is very likely bot traffic and should be filtered out so that your report is more precise.
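
The checks above can also be run on report data you export from Google Analytics. The sketch below is a minimal illustration, not a Google Analytics feature: the `SourceRow` layout, the spike threshold of three times the recent daily average, the `min_sessions` cut-off of 10, the `example.com` hostnames and the sample rows are all assumptions made up for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SourceRow:
    """One row of an exported referral report (hypothetical layout)."""
    source: str
    hostname: str
    sessions: int
    bounce_rate: float           # per cent, 0 to 100
    avg_duration_seconds: float  # average visit duration in seconds


def is_spike(daily_users: list[int], today: int, factor: float = 3.0) -> bool:
    """Flag today's user count as a spike if it exceeds `factor` times the recent daily average."""
    return bool(daily_users) and today > factor * mean(daily_users)


def suspicion_reasons(row: SourceRow, my_hostnames: set[str], min_sessions: int = 10) -> list[str]:
    """Return the reasons (if any) why a referral source looks like bot traffic."""
    reasons = []
    # Ghost referrals never touch your site, so the recorded hostname is not yours.
    if row.hostname.lower() not in my_hostnames:
        reasons.append("hostname is not your site (possible ghost referral)")
    # Every visit bounced with zero duration; the session minimum avoids flagging
    # a source that sent only one or two genuine single-page visits.
    if row.sessions >= min_sessions and row.bounce_rate >= 100 and row.avg_duration_seconds == 0:
        reasons.append("100% bounce rate and 00:00:00 average duration")
    return reasons


# Made-up sample data for illustration only.
my_hosts = {"example.com", "www.example.com"}
rows = [
    SourceRow("news.example.org", "www.example.com", 40, 55.0, 95.0),
    SourceRow("best-seo-solution.com", "www.example.com", 120, 100.0, 0.0),
    SourceRow("semalt.semalt.com", "(not set)", 75, 100.0, 0.0),
]
for row in rows:
    reasons = suspicion_reasons(row, my_hosts)
    if reasons:
        print(f"{row.source}: {'; '.join(reasons)}")

print(is_spike([120, 130, 110, 125], today=900))  # True
```

A flag here is a prompt to look more closely in the report, not proof; the thresholds are worth tuning to your own traffic levels.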

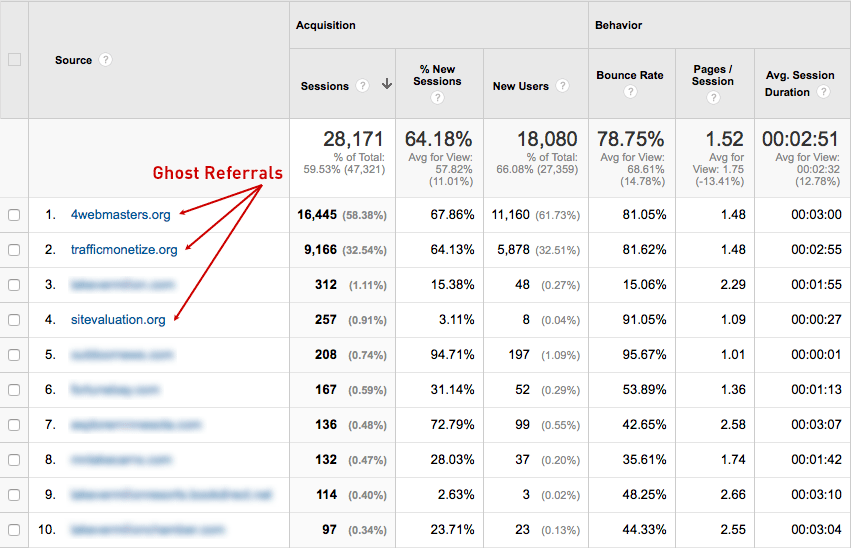

You can see the types of ghost referrals in the picture above.

Some other examples of bot traffic sources are:

- webmonetizer.net

- www.Get-Free-Traffic-Now.com

- best-seo-solution.com

- forum.topic55627636.darodar.com

- buy-cheap-online.info

- addons.mozilla.org

- semalt.semalt.com

- acunetix-referrer.com

- thumbnail.ws

- flipboard.com

- urlopener.com

- wiki.vodia.com

- google.de

- Duckduckgo.com
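
If you export your referral sources, you can check them against a blocklist of domains like these. The sketch below is a minimal example under stated assumptions: `SPAM_DOMAINS`, `referrer_domain` and `is_spam_referrer` are hypothetical names, and the blocklist holds only a handful of the domains above. Some entries in the list, such as google.de, duckduckgo.com and flipboard.com, are well-known legitimate sites that also send real visitors, so blocking them outright would hide genuine traffic. The check matches parent domains too, so `forum.topic55627636.darodar.com` is caught by `darodar.com`.

```python
from urllib.parse import urlparse

# A few of the spam domains from the list above; add the ones you find in your own reports.
SPAM_DOMAINS = frozenset({
    "webmonetizer.net",
    "get-free-traffic-now.com",
    "best-seo-solution.com",
    "darodar.com",
    "buy-cheap-online.info",
    "semalt.com",
    "acunetix-referrer.com",
})


def referrer_domain(referrer: str) -> str:
    """Return the lowercase host of a referrer given as a bare domain or a full URL."""
    # urlparse only fills in the host when it follows "//", so add it to bare domains.
    url = referrer if "://" in referrer else "//" + referrer
    return (urlparse(url).hostname or "").rstrip(".")


def is_spam_referrer(referrer: str, blocklist: frozenset[str] = SPAM_DOMAINS) -> bool:
    """True if the referrer's domain, or any parent domain of it, is on the blocklist."""
    labels = referrer_domain(referrer).split(".")
    # e.g. forum.topic55627636.darodar.com -> topic55627636.darodar.com -> darodar.com -> com
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


for ref in ["forum.topic55627636.darodar.com", "https://semalt.semalt.com/crawler",
            "www.Get-Free-Traffic-Now.com", "news.example.org"]:
    print(ref, is_spam_referrer(ref))
```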

Let’s Learn How To Filter These Bots Out

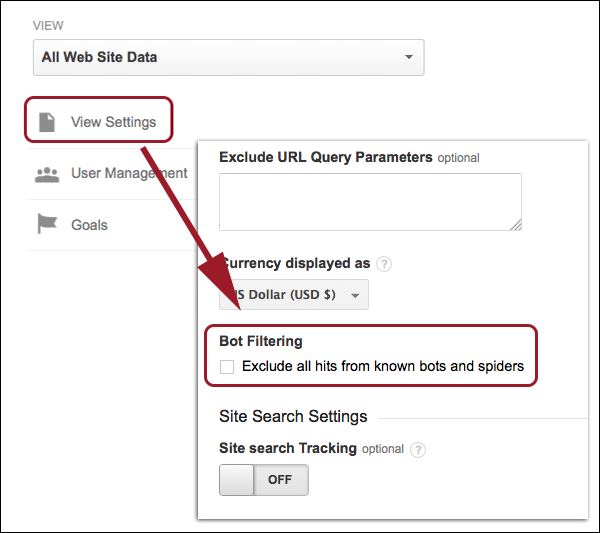

Start by going to the Admin panel in Google Analytics and clicking View Settings. Near the bottom you will find the Bot Filtering option. Check “Exclude all hits from known bots and spiders” to exclude hits from known bots and make your analytics more accurate.

Conclusion

Make sure you review your analytics reports regularly so that your analysis rests on accurate data. Look for spikes in the graph and use the methods above to check whether they are due to bots. Ignoring anomalies in your data can be highly detrimental to your business, because your decisions will then be based on skewed numbers.

Remember: it’s always better to be safe than sorry, even when it comes to data.